If your growth dashboard still treats “visibility” as rankings + clicks, you are flying blind.

In 2026, the center of gravity has shifted from “ranking pages” to “being used in answers.” Google’s AI Overviews and AI Mode, Microsoft Copilot, and chat-style answer engines increasingly satisfy intent on the results page or inside a conversational interface. They may still send traffic, but they also produce something new and board-relevant: brand exposure without a click.

This is not an “SEO is dead” argument. Google explicitly says foundational SEO best practices remain relevant for AI features like AI Overviews and AI Mode. The change is that classic SEO is now the first layer of a larger visibility system. B2B teams need a second layer of strategy and measurement focused on answer surfaces, where citations, mentions, and source selection often matter before (and sometimes instead of) clicks.

Consider this a strategy memo for CEOs and CMOs: what AEO and GEO actually mean, why the shift became urgent, and what to measure and operationalize in the next 30 days.

- AEO and GEO are not the same thing (but they overlap)

- Why this became urgent in 2026

- The new KPI stack for B2B visibility

- What the current evidence says (and what it does not)

- A practical AEO/GEO operating model for B2B teams

- What to do in the next 30 days (without chasing hype)

- The white spaces smart B2B brands should own now

- What is overdone (and what to stop posting)

- Final takeaway

- FAQ

- Sources

AEO and GEO are not the same thing (but they overlap)

What AEO means in practical terms

Answer Engine Optimization (AEO) is about making your content extractable and usable by answer systems.

Think “be the answer,” not “win the blue link.”

In practice, AEO is a discipline of:

- Writing directly to questions and tasks (definition, steps, comparison criteria, pros and cons)

- Structuring pages so key passages can be lifted accurately (tight headings, crisp summaries, scannable tables)

- Removing ambiguity (consistent entity naming, explicit definitions, clear claims with evidence)

- Ensuring technical eligibility (indexing, snippet eligibility, accessibility, fast rendering)

Google’s own guidance is blunt: there are no special optimizations required to appear in AI Overviews or AI Mode beyond being indexed and eligible for a snippet, and SEO fundamentals still matter. That is AEO in a sentence: make your content easy to retrieve, understand, and quote correctly.

What GEO means in practical terms

Generative Engine Optimization (GEO) is about getting selected, cited, and trusted inside generative answers.

If AEO is “can the machine extract a correct answer from you,” GEO is “will the system choose you as a source when multiple options exist.”

Microsoft’s framing is useful here: it distinguishes AEO as clarity (AI can interpret accurate data) and GEO as credibility (authority signals that drive recommendations).

In practice, GEO expands the playing field:

- Your site competes with third-party sources (analyst reports, industry publications, documentation sites, forums, partners)

- Mentions may matter even when a link is absent

- Freshness, reputation, and corroboration influence selection

- Different engines behave differently in source selection and query handling

A simple way to internalize GEO: you are optimizing for source selection economics, not just rankings.

Why this distinction matters to B2B teams

For B2B, the consequences are concrete:

- AEO supports extraction. It improves your odds of being interpreted correctly and surfaced when your page is retrieved.

- GEO adds the messy part. It introduces cross-engine behavior, citation visibility, and competitive “who gets referenced” dynamics.

And the executive punchline:

You can “win SEO” and still lose mindshare inside AI answers.

Why this became urgent in 2026

The interface changed

Search is no longer only a SERP.

Google AI Overviews and AI Mode are designed to support follow-up conversation and deeper exploration. Google’s Search Central documentation notes that both AI Overviews and AI Mode can use “query fan-out,” issuing multiple related searches across subtopics and data sources to develop a response.

Google’s product updates in early 2026 reinforced this direction: AI Overviews and AI Mode were positioned as a more seamless conversational flow, with follow-up questions moving users from an overview into AI Mode.

For B2B discovery, that means:

- More multi-step journeys happen inside the interface

- Users compare vendors, approaches, and categories without ever opening 10 tabs

- The winning “unit” is often a cited recommendation, not a clicked listing

Measurement changed

Two developments matter for leadership reporting:

- Google folds AI feature visibility into Search Console’s core reporting. Google states that sites appearing in AI features (AI Overviews and AI Mode) are included in overall Search Console traffic and reported within the Performance report under the “Web” search type.

- Microsoft started surfacing citation telemetry directly. In February 2026, Bing Webmaster Tools introduced an “AI Performance” dashboard in public preview that reports how often your content is cited across Microsoft Copilot, AI-generated summaries in Bing, and partner integrations, including “Total Citations,” “Grounding queries,” and page-level citation activity.

This is the measurement inflection point: one major ecosystem is still largely click-centric in reporting, while another is explicitly building citation-level visibility.

Community behavior changed

If you want a quick reality check on where the market is moving, look at what the practitioner ecosystem is measuring and debating:

- CTR erosion and traffic displacement linked to AI Overviews

- Citations, mentions, and “share of answers” as new competitive territory

- The credibility layer: why authority and third-party references are showing up in outputs

Multiple industry studies and analyses have highlighted meaningful CTR and click declines on queries that trigger AI Overviews. For example, Seer Interactive’s data, as covered by Search Engine Land, points to a 61% drop in organic CTR (and 68% in paid) for informational queries with AI Overviews. Ahrefs’ updated analysis puts the click reduction associated with AI Overviews at 58%.

Meanwhile, Google has also been iterating on link presentation inside AI answers, including efforts to make sources more prominent and easier to access. That is a signal that the “citation layer” is now a first-class product problem, not an edge-case SEO issue.

The new KPI stack for B2B visibility

The old KPI stack (what most teams still report)

Most leadership dashboards still emphasize:

- Rankings

- Organic sessions

- CTR

- Leads from organic

These are still necessary. They are just incomplete.

The new KPI stack (add, do not replace)

To lead in 2026, add an “answer visibility” layer to the stack:

1) Citations and mentions in AI answers

Track whether your domain is cited, how often, and for which query themes. Microsoft’s AI Performance report is an early example of first-party citation telemetry, including grounding queries and page-level citation activity.

2) Share of visibility across answer engines

Not “share of voice” in the SERP. Share of citations and brand mentions across:

- Google AI Overviews / AI Mode

- Copilot / Bing AI summaries

- Chat assistants and answer engines relevant to your buyers

3) Brand search lift and branded demand capture

If buyers repeatedly see your brand in answers, your branded search volume may rise even as direct click-through falls. Measured against a baseline from before the exposure, this is one of the cleaner ways to connect “being mentioned” to demand.

4) Assisted conversions and pipeline influence

Treat AI answers as upper and mid-funnel assists. Tie visibility to:

- Return visits

- Direct traffic lifts

- Demo page assists

- Multi-touch attribution events

5) Direct and branded navigation share

If AI answers reduce top-of-funnel clicks, you may see a relative shift toward direct and branded navigation when intent matures. Track that share of sessions over time, separately from the assist events in item 4.
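To make "share of citations" (item 2 above) concrete, here is a minimal sketch of one possible definition: for each engine, the fraction of sampled answers that cite your domain at least once. The function name, the engine labels, and the sample data are all hypothetical, and other definitions (for example, your citations divided by all citations) are equally defensible.

```python
from collections import defaultdict


def share_of_citations(observations, domain):
    """Per-engine fraction of sampled answers that cite `domain`.

    `observations` is an iterable of (engine, cited_domains) pairs,
    one pair per prompt run. Engines with no runs are absent from the result.
    """
    answers = defaultdict(int)  # engine -> answers sampled
    hits = defaultdict(int)     # engine -> answers citing `domain`
    for engine, cited_domains in observations:
        answers[engine] += 1
        if domain in cited_domains:
            hits[engine] += 1
    return {engine: hits[engine] / answers[engine] for engine in answers}


# Hypothetical sample: four prompt runs across two engines.
sample = [
    ("google_ai", {"example.com", "analyst.example"}),
    ("google_ai", {"rival.example"}),
    ("copilot", {"example.com"}),
    ("copilot", {"example.com", "rival.example"}),
]
shares = share_of_citations(sample, "example.com")
# shares == {"google_ai": 0.5, "copilot": 1.0}
```

Running the same function with a competitor's domain gives the comparison figure for a leadership summary.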

The key leadership mindset shift

Two statements can be true at once:

- Visibility can rise while clicks fall.

- “Included in answers” is not the same as “winning revenue.”

Your job is to build a measurement system that keeps both in view: answer visibility metrics plus pipeline outcomes.

What the current evidence says (and what it does not)

What is becoming clear

AI answer visibility is measurable enough to manage.

Microsoft’s AI Performance launch is a major signal: citation metrics are starting to appear in first-party webmaster reporting, even though the dashboard is still in public preview.

Generative systems can behave differently than classic search.

Google’s query fan-out approach means AI features may issue multiple related searches to build an answer, potentially retrieving a broader set of supporting pages than a single classic query.

Links and citations are being actively redesigned.

Google’s ongoing changes to source link visibility inside AI Overviews and AI Mode highlight how central citations have become to the product experience.

What is still noisy

Traffic impact varies by query type and intent.

Informational queries are often more vulnerable to click displacement than high-intent, high-complexity tasks. Studies show large average effects, but your category mix matters.

Many viral takes are anecdotal.

“AI killed SEO” posts frequently generalize from a narrow dataset or a single site. Leadership should demand segmentation: by intent, by funnel stage, by query class.

A balanced operating assumption

Operate as if:

- Organic traffic is more volatile than it was in 2022 to 2024

- Answer visibility is becoming a durable competitive surface

- Pipeline impact, not impressions alone, is the KPI that holds up under scrutiny

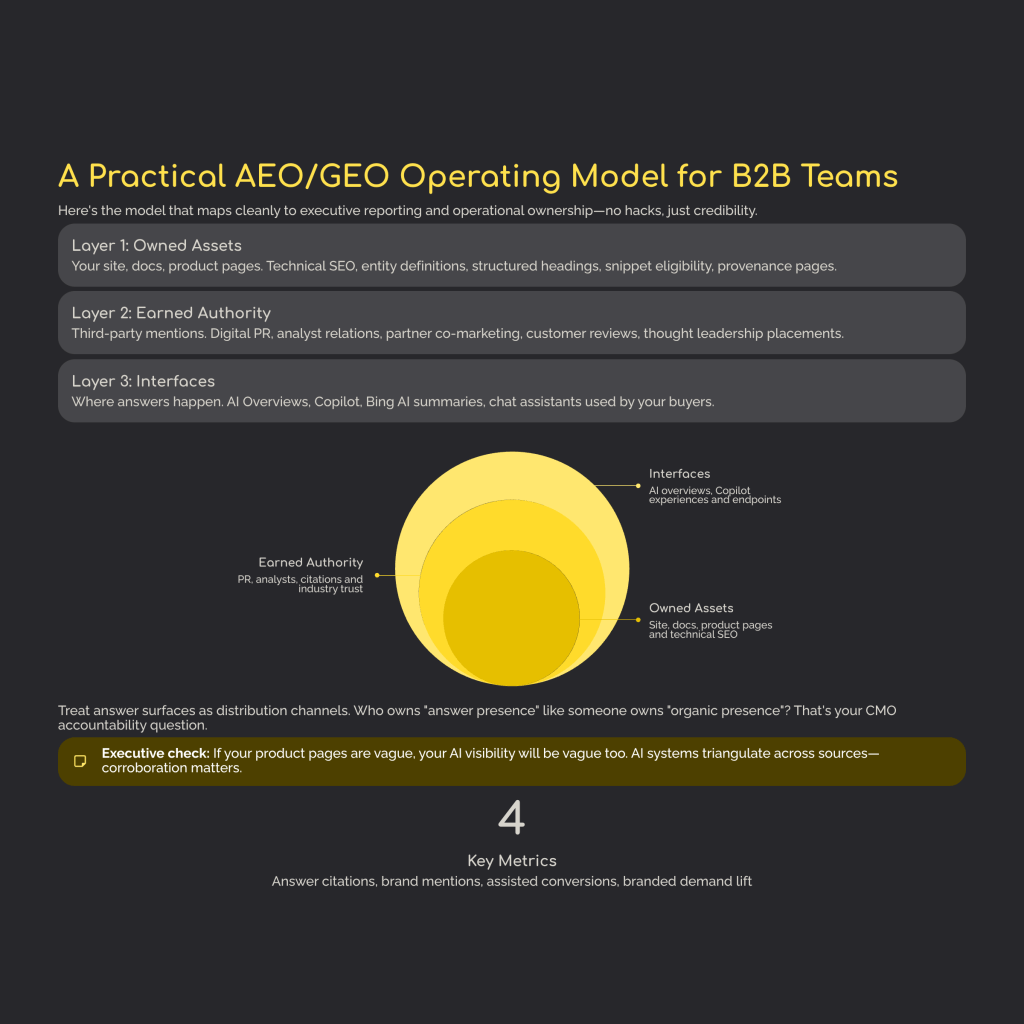

A practical AEO/GEO operating model for B2B teams

Here is the model that maps cleanly to executive reporting and operational ownership.

Layer 1: Owned assets (your site, docs, product pages)

This is still the foundation.

Focus areas:

- Technical SEO and indexability: crawlability, canonicalization, fast rendering, clean internal linking

- Clear entity definitions: consistent product naming, category naming, and terminology

- Structured headings and metadata: pages that read like a well-organized memo

- Snippet eligibility and content clarity: short definitions, step-by-step sections, precise comparisons

- Provenance pages: About, contact, editorial standards, and clear authorship to reinforce trust signals

Google’s AI features documentation reinforces that eligibility for AI Overviews and AI Mode depends on being indexed and eligible for a snippet, with no additional technical requirements.

Executive check: If your product pages are vague, your AI visibility will be vague too.

Layer 2: Earned authority (third-party mentions)

This is where many B2B teams are underinvested relative to the new environment.

Earned authority includes:

- Digital PR in credible industry publications

- Analyst relations and category reports

- Partner co-marketing and integration directories

- Customer reviews and high-trust marketplaces

- Thought leadership placements and podcast citations

Why this matters: AI systems frequently triangulate across sources. If your brand is only strong on your own domain, you are easier to ignore than a competitor with corroboration across the open web.

White-hat principles apply: prioritize real editorial mentions, link reclamation, broken link building, data-driven assets, and healthy anchor text ratios (keep exact match restrained, emphasize branded and natural anchors).

Layer 3: Interfaces (where answers happen)

Treat answer surfaces as distribution channels with their own measurement rules:

- Google AI Overviews / AI Mode (conversational journeys, query fan-out)

- Copilot and Bing AI summaries (increasingly measurable via citation telemetry)

- Chat assistants / answer engines used by your buyers (category and region dependent)

Ownership question for the CMO: who is accountable for “answer presence” the same way someone is accountable for “organic presence”?

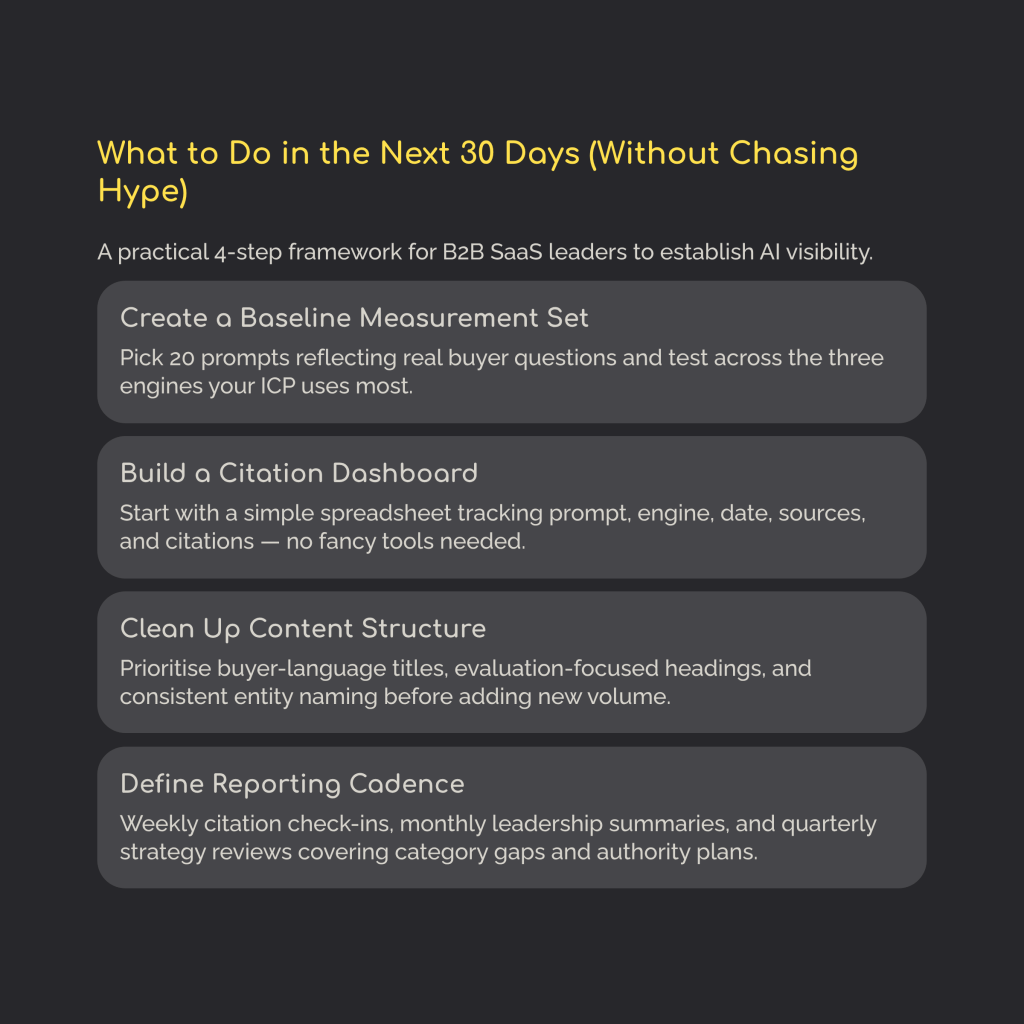

What to do in the next 30 days (without chasing hype)

1) Create a baseline measurement set

Pick 20 prompts that reflect real buyer questions across funnel stages:

- 5 top-of-funnel: definitions, problem framing

- 10 mid-funnel: comparisons, requirements, risks

- 5 bottom-of-funnel: vendor selection, implementation, pricing models (where appropriate)

Test across three engines you actually care about (example: Google AI Overviews/AI Mode, Copilot, and one additional assistant used by your ICP).

Track:

- Whether you are cited (Y/N)

- Position and prominence (top citation vs buried)

- Which competitors are cited

- Overlap across engines

- Any recurring third-party sources

Google’s query fan-out approach increases the chance that adjacent subtopics and supporting pages influence what gets shown, so prompt selection should include both primary and adjacent topics.
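The baseline above amounts to a small test grid: 20 prompts crossed with 3 engines, or 60 observations per run. A minimal sketch of building that grid and checking the 5/10/5 funnel mix follows. The stage labels, engine labels, and placeholder prompts are assumptions for illustration only.

```python
from collections import Counter
from itertools import product

# Funnel mix from the plan above: 5 top, 10 mid, 5 bottom.
STAGE_QUOTA = {"top": 5, "mid": 10, "bottom": 5}


def build_test_grid(prompts, engines):
    """Cross every (stage, prompt) pair with every engine.

    Raises ValueError if the prompt set does not match STAGE_QUOTA.
    """
    counts = Counter(stage for stage, _ in prompts)
    if dict(counts) != STAGE_QUOTA:
        raise ValueError(f"expected {STAGE_QUOTA}, got {dict(counts)}")
    return [
        {"stage": stage, "prompt": text, "engine": engine}
        for (stage, text), engine in product(prompts, engines)
    ]


# Placeholder prompts; replace with real buyer questions.
prompts = [
    (stage, f"{stage} question {i + 1}")
    for stage, n in STAGE_QUOTA.items()
    for i in range(n)
]
engines = ["google_ai", "copilot", "other_assistant"]
grid = build_test_grid(prompts, engines)  # 20 prompts x 3 engines = 60 rows
```

Keeping the grid fixed between runs is what makes week-over-week citation changes comparable.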

2) Build a citation dashboard (manual first is okay)

Do not wait for a tool.

A simple sheet is enough:

- Prompt

- Engine

- Date

- Cited sources

- Your domain cited? (Y/N)

- Competitor cited? (Y/N)

- Query intent

- Downstream action observed (brand search, direct visit, demo assist)

If you are in Microsoft’s ecosystem, monitor Bing Webmaster Tools AI Performance for a first-party view of citations and grounding queries.
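If a script is easier to maintain than a hand-edited sheet, the same columns can be written as CSV. This is a rough sketch, assuming one row per prompt and engine; the column names, the semicolon-joined `cited_sources` field, and the sample values are illustrative choices, not a standard.

```python
import csv
import io

# Column names mirror the sheet fields listed above.
COLUMNS = [
    "prompt", "engine", "date", "cited_sources",
    "own_domain_cited", "competitor_cited",
    "query_intent", "downstream_action",
]


def to_row(prompt, engine, date, cited, own_domain, competitors,
           intent, downstream=""):
    """Build one sheet row, deriving the two Y/N columns from `cited`."""
    return {
        "prompt": prompt,
        "engine": engine,
        "date": date,
        "cited_sources": ";".join(cited),
        "own_domain_cited": "Y" if own_domain in cited else "N",
        "competitor_cited": "Y" if any(c in cited for c in competitors) else "N",
        "query_intent": intent,
        "downstream_action": downstream,
    }


def write_log(rows, handle):
    """Write a header line plus one CSV line per row to an open text handle."""
    writer = csv.DictWriter(handle, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)


# Hypothetical observation, written to an in-memory buffer for illustration.
row = to_row(
    prompt="What is answer engine optimization?",
    engine="copilot",
    date="2026-02-23",
    cited=["example.com", "rival.example"],
    own_domain="example.com",
    competitors=["rival.example"],
    intent="informational",
)
buffer = io.StringIO()
write_log([row], buffer)
```

Deriving the Y/N columns from the cited-source list, rather than typing them by hand, keeps the sheet internally consistent.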

3) Clean up content structure before content volume

The fastest wins are often structural.

Prioritize:

- Titles that match buyer language

- Headings that mirror evaluation criteria

- Consistent entity naming

- Product page clarity (what it is, who it is for, key differentiators, proof)

- Updated About, contact, and editorial standards pages

Google emphasizes that there are no special schema tricks required to show in AI features and that SEO fundamentals still matter. So treat “clean structure” as a compounding asset, not a one-off task.

4) Define your AI visibility reporting cadence

Keep it simple:

- Weekly ops view: prompt set, citation changes, issues found, content fixes shipped

- Monthly leadership summary: share of citations, brand mention accuracy, assisted conversion signals

- Quarterly strategy review: category coverage gaps, earned authority plan, technical roadmap

The white spaces smart B2B brands should own now

Governance and risk

AI answers introduce new reputational risks:

- Misrepresentation of your product capabilities

- Attribution drift (your ideas repeated without your brand)

- Scam and impersonation vectors in search experiences

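Recent reporting has highlighted risks to users when AI summaries mislead, especially in sensitive categories such as health. The Guardian, for example, reported that Google puts users at risk by downplaying health disclaimers under AI Overviews. That underscores why accuracy and provenance matter.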

For B2B, the governance play is:

- Publish with citability and safety in mind

- Make claims verifiable

- Keep critical pages current

- Build a rapid correction loop (docs updates, clarification posts, PR outreach where needed)

Economics and crawl policy

Every executive team will face choices about:

- Allow vs restrict crawler access

- Monetization and licensing considerations

- What content is “public knowledge” vs “gated value”

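Google’s documentation points to preview controls such as nosnippet, data-nosnippet, max-snippet, and noindex for limiting what appears in Search, including AI features. Separately, it references the Google-Extended robots.txt token, which limits use of your content for AI training and grounding in some of Google’s other systems and does not affect inclusion in Google Search.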

This becomes a strategy question, not a technical one: what do you want AI systems to learn from, and what do you want to reserve for conversion moments?
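Before shipping a crawl-policy change, it can help to check what a draft robots.txt actually permits. The sketch below uses Python's standard `urllib.robotparser` against a hypothetical policy that opts out of Google-Extended while leaving other crawlers allowed. It models robots.txt matching only; it says nothing about how any crawler behaves in practice, and page-level controls such as nosnippet and noindex live in meta tags or headers, not in robots.txt.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: opt out of Google-Extended, allow everyone else.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def allowed(robots_txt, user_agent, url):
    """Return True if `user_agent` may fetch `url` under `robots_txt`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


url = "https://example.com/pricing"  # placeholder URL
extended_ok = allowed(ROBOTS_TXT, "Google-Extended", url)  # False
googlebot_ok = allowed(ROBOTS_TXT, "Googlebot", url)       # True
```

The same helper can be pointed at other crawler tokens your team decides to allow or restrict.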

End-to-end technical reality

AEO and GEO are not “just add schema.”

The real pipeline often looks like:

Retrieval → rerank → generation

Implications:

- Retrieval favors clear entities, accessible text, and strong topical coverage

- Reranking is influenced by authority signals and corroboration

- Generation rewards precise, quotable passages with low ambiguity

Google’s documentation explicitly references query fan-out and the use of multiple related searches to develop responses, which increases the importance of both topical breadth and supporting pages.
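As a mental model only, the three stages can be sketched as a toy pipeline. Nothing here reflects how Google, Microsoft, or any other engine is implemented: the term-overlap retrieval, the `corroborations` score standing in for authority, and the quote-the-first-sentence generation step are all simplifying assumptions, and the pages are invented.

```python
import re


def tokens(text):
    """Lowercased set of alphanumeric terms in `text`."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, pages, k=3):
    """Keep up to k pages sharing a term with the query, most overlap first."""
    q = tokens(query)
    scored = [(len(q & tokens(p["text"])), p) for p in pages]
    matched = [(score, p) for score, p in scored if score > 0]
    matched.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in matched[:k]]


def rerank(candidates):
    """Reorder candidates by a made-up third-party corroboration count."""
    return sorted(candidates, key=lambda p: p["corroborations"], reverse=True)


def generate(ranked):
    """Quote the first sentence of the top source and attribute it."""
    if not ranked:
        return "No source found."
    top = ranked[0]
    first_sentence = top["text"].split(". ")[0].rstrip(".")
    return f'{first_sentence}. (source: {top["domain"]})'


# Invented pages: vendor-a matches the query best, vendor-b is better corroborated.
pages = [
    {"domain": "vendor-a.example", "corroborations": 1,
     "text": "What is answer engine optimization? It makes content extractable."},
    {"domain": "vendor-b.example", "corroborations": 4,
     "text": "Answer engine optimization structures content so systems can quote it. It overlaps with SEO."},
    {"domain": "unrelated.example", "corroborations": 9,
     "text": "Quarterly payroll checklist for finance teams."},
]
query = "what is answer engine optimization"
answer = generate(rerank(retrieve(query, pages)))
```

In this toy run, vendor-a wins retrieval on term overlap but vendor-b is quoted because it is better corroborated, while the unrelated page never enters the candidate set despite its high score. That is the "win SEO, lose the answer" dynamic in miniature.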

What is overdone (and what to stop posting)

If your team is trying to lead a category, stop wasting cycles on:

- “SEO is dead” doom hooks

- Generic AEO/GEO checklists with no measurement loop

- Terminology debates with no reporting

- Tool screenshots without business context

Executives do not need more jargon. They need an evidence loop: baseline, experiments, metrics, pipeline impact.

Final takeaway

Keep the SEO fundamentals. Google still expects them, and AI features still depend on basic eligibility and helpful content.

Then add the missing layer:

- Measure AI visibility directly (citations, mentions, share across engines)

- Build the three-layer visibility stack (Owned + Earned + Interfaces)

- Report business outcomes (assists and pipeline influence, not impressions alone)

If you do that, you stop reacting to hype and start managing visibility as a modern growth system.

FAQ

Is AEO just SEO with a new name?

Not exactly. AEO overlaps heavily with good SEO and good content design, but it emphasizes extractability and answer-ready structure. In practice, many “AEO wins” come from making content clearer and easier to quote correctly, not from new technical tricks.

What is the difference between AEO and GEO?

AEO is about being extractable and answerable. GEO is about being selected, cited, and trusted inside generative answers. Microsoft summarizes it as clarity (AEO) vs credibility (GEO).

How do I measure AI visibility if traffic is falling?

Start with a manual prompt set and track citations and competitor presence. If you are in Bing’s ecosystem, use Bing Webmaster Tools AI Performance for citation telemetry and grounding queries. Then connect those visibility shifts to branded demand and assisted conversions.

Should B2B teams focus on citations or clicks?

Both, but not equally at every funnel stage. Citations and mentions can drive mindshare and demand creation. Clicks and conversions validate revenue capture. The leadership goal is to measure both and tie them to pipeline.

Do I need new tools to start GEO?

No. Start with manual tracking, a baseline set of prompts, and a reporting cadence. Tools help later, but they do not replace a sound operating model.

Sources

Google Search Central. AI features and your website. Google for Developers. Accessed February 23, 2026. https://developers.google.com/search/docs/appearance/ai-features

Bing Webmaster Blog. Introducing AI Performance in Bing Webmaster Tools Public Preview. Published February 10, 2026. Accessed February 23, 2026. https://blogs.bing.com/webmaster/February-2026/Introducing-AI-Performance-in-Bing-Webmaster-Tools-Public-Preview

Myers J. From Discovery to Influence: A Guide to AEO and GEO. Microsoft Advertising. Published January 6, 2026. Accessed February 23, 2026. https://about.ads.microsoft.com/en/blog/post/january-2026/from-discovery-to-influence-a-guide-to-aeo-and-geo

Stein R. Just ask anything: a seamless new Search experience. Google Blog. Published January 27, 2026. Accessed February 23, 2026. https://blog.google/products-and-platforms/products/search/ai-mode-ai-overviews-updates/

Law R, Guan X. Update: AI Overviews Reduce Clicks by 58%. Ahrefs Blog. Published February 4, 2026. Accessed February 23, 2026. https://ahrefs.com/blog/ai-overviews-reduce-clicks-update/

Goodwin D. Google AI Overviews drive 61% drop in organic CTR, 68% in paid. Search Engine Land. Published November 4, 2025. Accessed February 23, 2026. https://searchengineland.com/google-ai-overviews-drive-drop-organic-paid-ctr-464212

Roth E. Google’s AI search results will make links more obvious. The Verge. Published February 17, 2026. Accessed February 23, 2026. https://www.theverge.com/tech/880475/google-ai-overviews-ai-mode-links-update

Gregory A. Google puts users at risk by downplaying health disclaimers under AI Overviews. The Guardian. Published February 16, 2026. Accessed February 23, 2026. https://www.theguardian.com/technology/2026/feb/16/google-puts-users-at-risk-downplaying-disclaimers-ai-overviews